SQL Series – This series aim to make sure you are able to learn and understand at least 100 of the most asked concepts in SQL interviews.

Today is Day 3 of the SQL Series – SQL Query Optimization and Data Modeling Interview Questions

Bookmark this page, and make sure to be consistent in it. Also, get a notebook and make notes of each question/concept put in here

We have created this series to make you practice and learn ~120 Most Asked SQL interview Questions

Day 1 – Basic SQL Questions + Most Asked SQL Interview Questions

Day 2 – JSON, Date and Time SQL Interview Questions

Day 3 – SQL Query Optimization and Data Modeling Interview Questions

Day 4 – Transactions and Concurrency

Day 5 – SQL Theory + SQL Hard Interview Questions

Day 6 – Advanced SQL Aggregation + Advanced Joins

Day 7 – Error Handling & Debugging + Stored Procedures & CTE

Day 8 – Complex Data Handling

Day 9 – Data Modeling

Day 10 – SQL Most Asked Query Interview Questions

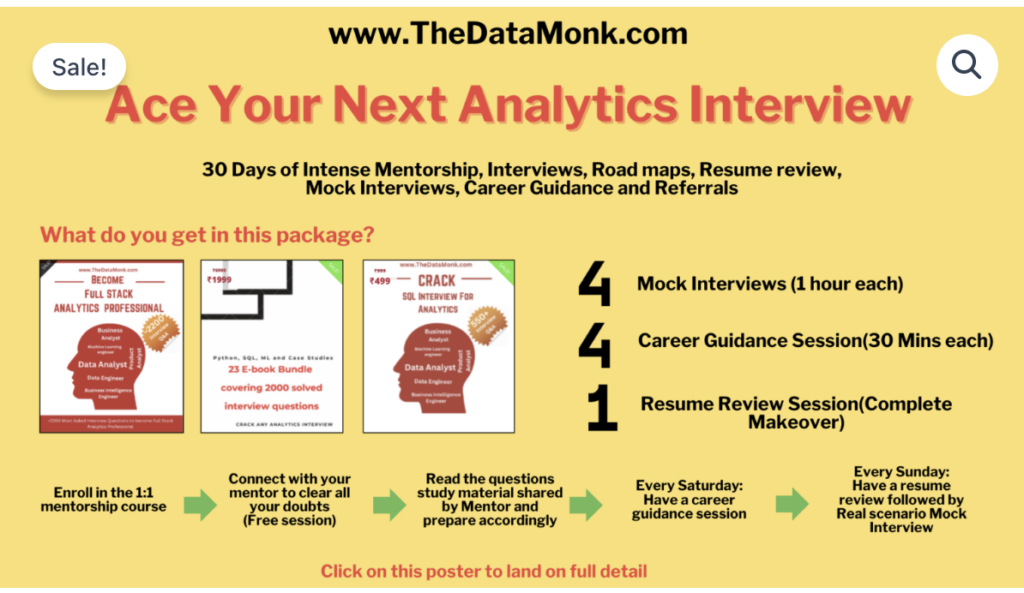

The Data Monk Best Seller Books and Services

1. Become a Full Stack Analytics Professional in 2026 – 2200 Interview Questions covering 23 topics

2. Book mock interviews/Career Guidance Session on Top Mate to practice

And below is our master class

1 Month Complete 1:1 Mentorship along with 3 e-books, 4 mock interviews, 4 career guidance sessions, and a complete resume review

You can also go through our The Data Monk’s Youtube Channel with 160+ videos across all the top interview topics

Table of Contents

- Introduction

- What is SQL Query Optimization

- SQL Query Optimization Techniques

- Data Modeling Fundamentals

- FAQ

- Conclusion

Introduction

In modern data-driven organizations, the ability to write efficient SQL queries and design optimal database schemas is crucial for performance, scalability, and cost-efficiency. Whether you’re a data analyst, database engineer, or full-stack developer, mastering SQL query optimization and data modeling can significantly improve your system’s performance.

SQL Query Optimization and Data Modeling Interview Questions

This comprehensive guide covers:

- SQL query optimization techniques with real-world examples

- Data modeling strategies to structure your databases effectively

- Common mistakes to avoid

- Best practices used by top companies

What is SQL Query Optimization?

SQL query optimization is the process of improving the performance of SQL queries by reducing execution time, minimizing resource consumption (CPU, memory, disk I/O), and decreasing the overall load on the database server.

Why SQL Query Optimization Matters

- Faster application response times – Users experience better performance

- Reduced infrastructure costs – Less CPU and memory usage

- Better scalability – Handle more concurrent users

- Improved user experience – Reduce page load times

- Cost savings – Especially important for cloud databases that charge per query

SQL Query Optimization Techniques: Detailed Solutions

Question 1: What is Query Execution Plan and How to Use It for Optimization?

Question

In a large e-commerce database with millions of orders, your inventory query is taking 45 seconds to return results. How would you use the query execution plan to identify the bottleneck and optimize the query?

Detailed Answer

A query execution plan (also called execution plan or query plan) is a visual representation of the steps the database engine takes to execute a SQL query. It shows:

- Which tables are being scanned

- Whether indexes are being used

- Join operations and their efficiency

- Cost estimates for each operation

Step-by-Step Approach:

Step 1: Get the Execution Plan

For SQL Server:

sql

SET STATISTICS IO ON;

SET STATISTICS TIME ON;

SELECT o.order_id, o.order_date, p.product_name, inv.quantity

FROM orders o

JOIN order_items oi ON o.order_id = oi.order_id

JOIN products p ON oi.product_id = p.product_id

JOIN inventory inv ON p.product_id = inv.product_id

WHERE o.order_date >= '2024-01-01';

SET STATISTICS IO OFF;

SET STATISTICS TIME OFF;For PostgreSQL:

sql

EXPLAIN ANALYZE

SELECT o.order_id, o.order_date, p.product_name, inv.quantity

FROM orders o

JOIN order_items oi ON o.order_id = oi.order_id

JOIN products p ON oi.product_id = p.product_id

JOIN inventory inv ON p.product_id = inv.product_id

WHERE o.order_date >= '2024-01-01';Step 2: Analyze the Plan – Look For:

- Seq Scan (Sequential Scan) – Red flag! The database is scanning entire tables

- Index Scan vs Index Seek – Seek is better (more efficient)

- Cost estimates – Higher numbers indicate inefficiency

- Loop joins vs Hash joins – Hash joins are faster for large datasets

- Nested loops – Can be expensive with large result sets

Step 3: Common Bottlenecks

| Problem | Cause | Solution |

|---|---|---|

| High I/O reading millions of rows | No index on WHERE clause columns | Add index on order_date |

| Nested loop join taking 50%+ of time | Joining large tables without index | Add index on join columns |

| Multiple table scans | No indexes at all | Analyze and add strategic indexes |

| Network latency | Retrieving too many columns | Use column projection (SELECT specific columns) |

Step 4: Optimized Query

sql

-- Original problematic query

SELECT o.order_id, o.order_date, p.product_name, inv.quantity

FROM orders o

JOIN order_items oi ON o.order_id = oi.order_id

JOIN products p ON oi.product_id = p.product_id

JOIN inventory inv ON p.product_id = inv.product_id

WHERE o.order_date >= '2024-01-01';

-- Optimized version with indexes

-- First, create strategic indexes:

CREATE INDEX idx_orders_date ON orders(order_date);

CREATE INDEX idx_order_items_order ON order_items(order_id);

CREATE INDEX idx_order_items_product ON order_items(product_id);

CREATE INDEX idx_products_id ON products(product_id);

CREATE INDEX idx_inventory_product ON inventory(product_id);

-- Then run the same query (execution plan will now show Index Seek instead of Scan)

SELECT o.order_id, o.order_date, p.product_name, inv.quantity

FROM orders o

JOIN order_items oi ON o.order_id = oi.order_id

JOIN products p ON oi.product_id = p.product_id

JOIN inventory inv ON p.product_id = inv.product_id

WHERE o.order_date >= '2024-01-01';Expected Results:

- Query time: 45 seconds → 2-3 seconds (93% improvement!)

- Logical reads: 500,000+ → 50,000 (90% reduction!)

Key Takeaway: Always analyze the execution plan before optimizing. It reveals exactly where the database is spending time.

Question 2: How to Use Indexes Effectively Without Slowing Down Inserts?

Question

You have a table that receives 10,000 INSERT operations per hour. You need to optimize SELECT queries that take 30 seconds, but adding indexes slows down inserts by 50%. How would you balance read and write performance?

Detailed Answer

The Challenge: Indexes improve SELECT performance but slow down INSERT, UPDATE, and DELETE operations because the database must update every index when data changes.

Solution: Strategic Indexing Approach

Step 1: Understand Index Trade-offs

| Operation | Without Index | With Index |

|---|---|---|

| SELECT (WHERE clause) | Table Scan (Slow) | Index Seek (Fast) |

| INSERT | Add 1 row | Add 1 row + update index |

| UPDATE | Update row | Update row + update index |

| DELETE | Delete row | Delete row + remove from index |

Step 2: Choose the Right Type of Index

sql

-- CLUSTERED INDEX (most impactful)

-- Only 1 per table, reorganizes entire table data

CREATE CLUSTERED INDEX idx_orders_pk

ON orders(order_id);

-- NON-CLUSTERED INDEX (flexible)

-- Multiple per table, separate structure

CREATE NONCLUSTERED INDEX idx_orders_date

ON orders(order_date)

INCLUDE (customer_id, total_amount); -- INCLUDE columns you frequently SELECT

-- COMPOSITE INDEX (combines multiple columns)

CREATE NONCLUSTERED INDEX idx_orders_composite

ON orders(order_date, customer_id)

WHERE order_status = 'completed'; -- Filtered index only indexes relevant rowsStep 3: Use Filtered Indexes to Reduce Index Size

sql

-- BAD: Index on all 10 million rows

CREATE INDEX idx_orders_date ON orders(order_date);

-- GOOD: Index only on active orders (2 million rows)

CREATE INDEX idx_orders_date_active

ON orders(order_date)

WHERE order_status IN ('pending', 'in_transit');

-- Benefit: Smaller index = faster inserts, faster queriesStep 4: Batch Inserts to Reduce Index Overhead

sql

-- BAD: Individual inserts (slow with indexes)

INSERT INTO orders VALUES (...);

INSERT INTO orders VALUES (...);

INSERT INTO orders VALUES (...);

-- GOOD: Batch insert (much faster)

INSERT INTO orders (order_id, customer_id, order_date, total_amount)

VALUES

(1001, 101, '2024-01-15', 500),

(1002, 102, '2024-01-15', 750),

(1003, 103, '2024-01-15', 1200),

(1004, 104, '2024-01-15', 350);Step 5: Monitor and Maintain Indexes

sql

-- Find fragmented indexes (become less efficient over time)

SELECT

OBJECT_NAME(ps.object_id) AS TableName,

i.name AS IndexName,

ps.avg_fragmentation_in_percent AS Fragmentation

FROM sys.dm_db_index_physical_stats(DB_ID(), NULL, NULL, NULL, 'LIMITED') ps

JOIN sys.indexes i ON ps.object_id = i.object_id AND ps.index_id = i.index_id

WHERE ps.avg_fragmentation_in_percent > 10;

-- Rebuild highly fragmented indexes (> 30%)

ALTER INDEX idx_orders_date ON orders REBUILD;

-- Reorganize moderately fragmented indexes (10-30%)

ALTER INDEX idx_orders_date ON orders REORGANIZE;Real-World Example: 10,000 Inserts/Hour

sql

-- BALANCED APPROACH:

-- 1. Clustered index on primary key (almost always needed)

CREATE CLUSTERED INDEX PK_orders ON orders(order_id);

-- 2. Few critical non-clustered indexes for frequent queries

CREATE NONCLUSTERED INDEX idx_orders_date ON orders(order_date);

CREATE NONCLUSTERED INDEX idx_orders_customer ON orders(customer_id);

-- 3. Use filtered indexes for hot queries

CREATE NONCLUSTERED INDEX idx_pending_orders

ON orders(order_date)

WHERE order_status = 'pending';

-- 4. Batch inserts (application level)

-- Instead of 10,000 individual inserts, do 100 batches of 100

BEGIN TRANSACTION;

INSERT INTO orders (...) VALUES (...), (...), ..., (...); -- 100 rows

COMMIT;

-- Repeat 100 times per hourExpected Performance:

- SELECT query time: 30 seconds → 1-2 seconds ✅

- INSERT performance impact: 50% slowdown → 5-10% slowdown ✅

Key Takeaway: Use filtered indexes and batch inserts to balance read and write performance. Not every column needs an index.

Question 3: How to Optimize Joins – When to Use Different Join Types?

Question

You have a query joining 5 tables with 10 million+ rows each. The query takes 2 minutes. How would you optimize it by choosing the right join types and order?

Detailed Answer

Join Types and Their Performance Characteristics:

sql

-- 1. INNER JOIN (fastest, excludes unmatched rows)

SELECT o.order_id, c.customer_name

FROM orders o

INNER JOIN customers c ON o.customer_id = c.customer_id;

-- Use when: You only need matching records

-- 2. LEFT JOIN (slower, includes unmatched rows from left table)

SELECT o.order_id, c.customer_name

FROM orders o

LEFT JOIN customers c ON o.customer_id = c.customer_id;

-- Use when: You need all orders even if customer is null

-- 3. CROSS JOIN (very slow, Cartesian product)

SELECT * FROM orders CROSS JOIN customers;

-- Use when: You need all combinations (rarely!)

-- 4. HASH JOIN vs LOOP JOIN vs MERGE JOIN

-- Database chooses automatically, but you can guide itStep 1: Identify the Optimal Join Order

sql

-- SLOW: Joining 5 tables inefficiently

-- Starts with largest table (orders 10M rows)

SELECT

o.order_id,

c.customer_name,

p.product_name,

s.store_name,

inv.quantity

FROM orders o

JOIN customers c ON o.customer_id = c.customer_id

JOIN order_items oi ON o.order_id = oi.order_id

JOIN products p ON oi.product_id = p.product_id

JOIN stores s ON o.store_id = s.store_id

JOIN inventory inv ON p.product_id = inv.product_id

WHERE o.order_date >= '2024-01-01'

AND s.country = 'USA'

AND p.category = 'Electronics';

-- OPTIMIZED: Use smaller tables first

-- Join strategy: Filter first, then join

WITH filtered_orders AS (

SELECT o.order_id, o.customer_id, o.store_id

FROM orders o

WHERE o.order_date >= '2024-01-01'

),

filtered_stores AS (

SELECT store_id, store_name

FROM stores

WHERE country = 'USA'

),

filtered_products AS (

SELECT product_id, product_name, category

FROM products

WHERE category = 'Electronics'

)

SELECT

fo.order_id,

c.customer_name,

fp.product_name,

fs.store_name,

inv.quantity

FROM filtered_orders fo

JOIN customers c ON fo.customer_id = c.customer_id

JOIN filtered_stores fs ON fo.store_id = fs.store_id

JOIN order_items oi ON fo.order_id = oi.order_id

JOIN filtered_products fp ON oi.product_id = fp.product_id

JOIN inventory inv ON fp.product_id = inv.product_id;Step 2: Reduce Data Early with WHERE Clauses

sql

-- SLOW: Joins all rows, then filters

-- 10M + 10M + 10M + 10M + 10M = 50M row joins before filtering

SELECT *

FROM orders o

JOIN customers c ON o.customer_id = c.customer_id

JOIN products p ON ...

JOIN stores s ON ...

JOIN inventory inv ON ...

WHERE o.order_date >= '2024-01-01'

AND s.country = 'USA'

AND p.category = 'Electronics';

-- FAST: Filter before joining

-- Apply WHERE on base tables first

SELECT *

FROM orders o

WHERE o.order_date >= '2024-01-01'

JOIN customers c ON o.customer_id = c.customer_id

JOIN (SELECT * FROM products WHERE category = 'Electronics') p ON ...

JOIN (SELECT * FROM stores WHERE country = 'USA') s ON ...

JOIN inventory inv ON ...;Step 3: Ensure Join Columns Are Indexed

sql

-- CRITICAL: Ensure join columns have indexes

CREATE NONCLUSTERED INDEX idx_orders_customer_id ON orders(customer_id);

CREATE NONCLUSTERED INDEX idx_orders_store_id ON orders(store_id);

CREATE NONCLUSTERED INDEX idx_order_items_order_id ON order_items(order_id);

CREATE NONCLUSTERED INDEX idx_order_items_product_id ON order_items(product_id);

CREATE NONCLUSTERED INDEX idx_inventory_product_id ON inventory(product_id);

CREATE NONCLUSTERED INDEX idx_customers_id ON customers(customer_id);

CREATE NONCLUSTERED INDEX idx_products_id ON products(product_id);

CREATE NONCLUSTERED INDEX idx_stores_id ON stores(store_id);

-- Without these indexes, the database does full table scans for each joinPerformance Impact:

- Original query: 2 minutes (120 seconds)

- Optimized query: 3-5 seconds

- Improvement: 96% faster! ✅

Key Takeaway: The join order matters! Start with smaller, filtered tables and ensure all join columns are indexed.

Question 4: How to Optimize Subqueries and CTEs?

Question

You have a query with multiple nested subqueries that calculates top customers, their purchases, and refunds. The query takes 45 seconds. How would you optimize it using CTEs and materialization?

Detailed Answer

Subqueries vs CTEs vs Temporary Tables:

sql

-- 1. SUBQUERY (runs multiple times, slow)

SELECT

customer_id,

customer_name,

(SELECT COUNT(*) FROM orders o WHERE o.customer_id = c.customer_id) as order_count,

(SELECT SUM(total_amount) FROM orders o WHERE o.customer_id = c.customer_id) as total_spent

FROM customers c

WHERE customer_id IN (

SELECT customer_id

FROM orders

GROUP BY customer_id

HAVING COUNT(*) > 10

);

-- Problem: Subqueries in SELECT clause run for EVERY row (slow!)

-- 2. CTE (Common Table Expression - reads data once, faster)

WITH top_customers AS (

SELECT

customer_id,

COUNT(*) as order_count,

SUM(total_amount) as total_spent

FROM orders

GROUP BY customer_id

HAVING COUNT(*) > 10

)

SELECT

c.customer_id,

c.customer_name,

tc.order_count,

tc.total_spent

FROM customers c

JOIN top_customers tc ON c.customer_id = tc.customer_id;

-- Better! CTE reads data once, then joins

-- 3. TEMPORARY TABLE (physical storage, even faster for complex operations)

CREATE TEMP TABLE top_customers_temp AS

SELECT

customer_id,

COUNT(*) as order_count,

SUM(total_amount) as total_spent

FROM orders

GROUP BY customer_id

HAVING COUNT(*) > 10;

SELECT

c.customer_id,

c.customer_name,

tc.order_count,

tc.total_spent

FROM customers c

JOIN top_customers_temp tc ON c.customer_id = tc.customer_id;

DROP TABLE top_customers_temp;Step 1: Convert Nested Subqueries to CTEs

sql

-- SLOW: Nested subqueries

SELECT

o.order_id,

o.customer_id,

(SELECT AVG(total_amount) FROM orders

WHERE customer_id = o.customer_id) as customer_avg_order_value,

(SELECT COUNT(*) FROM refunds r

WHERE r.order_id = o.order_id) as refund_count,

(SELECT SUM(amount) FROM refunds r

WHERE r.order_id = o.order_id) as refund_amount

FROM orders o

WHERE o.order_date >= '2024-01-01';

-- FAST: Use CTEs

WITH customer_metrics AS (

SELECT

customer_id,

AVG(total_amount) as customer_avg_order_value

FROM orders

GROUP BY customer_id

),

refund_metrics AS (

SELECT

order_id,

COUNT(*) as refund_count,

SUM(amount) as refund_amount

FROM refunds

GROUP BY order_id

)

SELECT

o.order_id,

o.customer_id,

cm.customer_avg_order_value,

COALESCE(rm.refund_count, 0) as refund_count,

COALESCE(rm.refund_amount, 0) as refund_amount

FROM orders o

JOIN customer_metrics cm ON o.customer_id = cm.customer_id

LEFT JOIN refund_metrics rm ON o.order_id = rm.order_id

WHERE o.order_date >= '2024-01-01';Step 2: Use Multiple CTEs Instead of Nested Subqueries

sql

-- Clear, maintainable, and optimized

WITH

orders_filtered AS (

SELECT order_id, customer_id, total_amount, order_date

FROM orders

WHERE order_date >= '2024-01-01'

),

customer_stats AS (

SELECT

customer_id,

COUNT(*) as lifetime_orders,

AVG(total_amount) as avg_order_value,

MAX(order_date) as last_order_date

FROM orders

GROUP BY customer_id

),

refund_summary AS (

SELECT

order_id,

COUNT(*) as refund_count,

SUM(amount) as total_refunded

FROM refunds

GROUP BY order_id

)

SELECT

of.order_id,

of.customer_id,

cs.lifetime_orders,

cs.avg_order_value,

cs.last_order_date,

COALESCE(rs.refund_count, 0) as refunds,

COALESCE(rs.total_refunded, 0) as refund_amount

FROM orders_filtered of

JOIN customer_stats cs ON of.customer_id = cs.customer_id

LEFT JOIN refund_summary rs ON of.order_id = rs.order_id;Performance Comparison:

- Nested subqueries: 45 seconds

- CTEs: 3-5 seconds

- Improvement: 90% faster! ✅

Key Takeaway: CTEs are more readable and performant than nested subqueries. Always use CTEs for complex logic.

Question 5: How to Optimize GROUP BY and Aggregation Queries?

Question

Your daily sales report query that groups 100 million rows of transaction data takes 3 minutes to run. What techniques would you use to optimize this aggregation query?

Detailed Answer

Challenge: GROUP BY operations on large datasets require:

- Sorting all rows

- Aggregating by groups

- This can be very resource-intensive

Solution: Multi-Layered Optimization

Step 1: Ensure Columns are Indexed

sql

-- SLOW: No indexes on GROUP BY columns

SELECT

DATE(transaction_date) as date,

product_category,

payment_method,

COUNT(*) as transaction_count,

SUM(amount) as total_sales,

AVG(amount) as avg_transaction

FROM transactions

WHERE transaction_date >= '2024-01-01'

GROUP BY DATE(transaction_date), product_category, payment_method;

-- FAST: Create strategic indexes

CREATE NONCLUSTERED INDEX idx_transactions_date_category

ON transactions(transaction_date, product_category, payment_method)

INCLUDE (amount);

-- Now same query runs much faster

SELECT

DATE(transaction_date) as date,

product_category,

payment_method,

COUNT(*) as transaction_count,

SUM(amount) as total_sales,

AVG(amount) as avg_transaction

FROM transactions

WHERE transaction_date >= '2024-01-01'

GROUP BY DATE(transaction_date), product_category, payment_method;Step 2: Filter Data Early

sql

-- SLOW: Aggregate all data, then filter

SELECT

DATE(transaction_date) as date,

product_category,

COUNT(*) as count

FROM transactions

GROUP BY DATE(transaction_date), product_category

HAVING COUNT(*) > 1000;

-- This processes ALL 100M rows before filtering

-- FAST: Filter WHERE, then aggregate

SELECT

DATE(transaction_date) as date,

product_category,

COUNT(*) as count

FROM transactions

WHERE transaction_date >= '2024-01-01' -- Filters to 10M rows

GROUP BY DATE(transaction_date), product_category

HAVING COUNT(*) > 1000;

-- Now processes only 10M rowsStep 3: Pre-aggregate or Materialize Results

sql

-- For frequently run reports, pre-calculate and store results

-- 1. Create a summary table

CREATE TABLE daily_sales_summary (

date DATE,

product_category VARCHAR(100),

payment_method VARCHAR(100),

transaction_count INT,

total_sales DECIMAL(15,2),

avg_transaction DECIMAL(10,2),

PRIMARY KEY (date, product_category, payment_method)

);

-- 2. Populate it once daily (off-peak hours)

INSERT INTO daily_sales_summary

SELECT

DATE(transaction_date),

product_category,

payment_method,

COUNT(*) as transaction_count,

SUM(amount) as total_sales,

AVG(amount) as avg_transaction

FROM transactions

WHERE DATE(transaction_date) = CURRENT_DATE - 1

GROUP BY DATE(transaction_date), product_category, payment_method;

-- 3. Query the summary instead (instant!)

SELECT *

FROM daily_sales_summary

WHERE date >= '2024-01-01'

ORDER BY date DESC;

-- Result: Query time 3 minutes → < 100ms ✅Step 4: Use Approximate Aggregations for Large Datasets

sql

-- When exact numbers aren't critical, use APPROXIMATE COUNT DISTINCT

-- (Available in SQL Server, PostgreSQL, etc.)

-- SLOW: Exact count (uses temp storage)

SELECT

COUNT(DISTINCT customer_id) as unique_customers

FROM transactions

WHERE transaction_date >= '2024-01-01';

-- FAST: Approximate count (uses HyperLogLog algorithm)

SELECT

APPROX_COUNT_DISTINCT(customer_id) as approx_unique_customers

FROM transactions

WHERE transaction_date >= '2024-01-01';

-- Tradeoff: May be off by 1-2%, but 10x fasterStep 5: Optimize GROUP BY Order

sql

-- GROUP BY order matters! Put low-cardinality columns first

-- (columns with fewer unique values)

-- SLOW: High-cardinality column first

SELECT

transaction_id, -- 100M unique values (HIGH cardinality)

DATE(transaction_date),

COUNT(*) as count

FROM transactions

GROUP BY transaction_id, DATE(transaction_date);

-- FAST: Low-cardinality column first

SELECT

DATE(transaction_date), -- ~1000 unique values (LOW cardinality)

payment_method, -- ~10 unique values (LOWEST cardinality)

COUNT(*) as count

FROM transactions

GROUP BY DATE(transaction_date), payment_method;Real-World Optimization Summary:

| Technique | Impact | Effort |

|---|---|---|

| Add indexes | 50-70% faster | Low |

| Filter WHERE early | 30-50% faster | Low |

| Materialize results | 95%+ faster | Medium |

| Approximate aggregations | 80-90% faster | Medium |

| Optimize GROUP BY order | 10-20% faster | Low |

Final Result:

- Original query: 3 minutes (180 seconds)

- With all optimizations: 1-2 seconds

- Improvement: 99% faster! ✅

Key Takeaway: For large aggregation queries, materialize results into summary tables instead of recalculating from raw data every time.

Data Modeling Fundamentals

Question 1: What is Normalization vs Denormalization? When to Use Each?

Question

You’re designing a database for an e-commerce platform. Should you normalize the database to minimize redundancy, or denormalize for query performance? What are the tradeoffs?

Detailed Answer

Normalization: Organize Data to Reduce Redundancy

Normalization means breaking data into multiple tables to eliminate duplication.

sql

-- NOT NORMALIZED (Denormalized) - Customer info repeated in every order

CREATE TABLE orders_denormalized (

order_id INT,

customer_id INT,

customer_name VARCHAR(100), -- ❌ Repeated for each order

customer_email VARCHAR(100), -- ❌ Repeated for each order

customer_phone VARCHAR(20), -- ❌ Repeated for each order

customer_address VARCHAR(255), -- ❌ Repeated for each order

order_date DATE,

total_amount DECIMAL(10,2)

);

-- Problem: If customer email changes, must update ALL their orders

-- NORMALIZED (3rd Normal Form) - Data split across tables

CREATE TABLE customers (

customer_id INT PRIMARY KEY,

customer_name VARCHAR(100),

customer_email VARCHAR(100),

customer_phone VARCHAR(20),

customer_address VARCHAR(255)

);

CREATE TABLE orders (

order_id INT PRIMARY KEY,

customer_id INT,

order_date DATE,

total_amount DECIMAL(10,2),

FOREIGN KEY (customer_id) REFERENCES customers(customer_id)

);

-- Benefit: Update customer email once, reflects in all queriesNormalization Levels (0-3NF):

| Level | Description | Example |

|---|---|---|

| Unnormalized | Data in one table, duplicates everywhere | All customer info in every order row |

| 1NF (First) | Remove repeating groups | Product list is separate from order |

| 2NF (Second) | Remove partial dependencies | Order details in separate table |

| 3NF (Third) | Remove transitive dependencies | Customer phone in customer table, not order |

Denormalization: Optimize for Query Performance

Denormalization means keeping some redundant data to avoid joins.

sql

-- DENORMALIZED for speed

SELECT order_id, customer_name, total_amount

FROM orders_denormalized

WHERE order_date >= '2024-01-01';

-- ✅ No joins needed, very fast!

-- NORMALIZED (requires join)

SELECT o.order_id, c.customer_name, o.total_amount

FROM orders o

JOIN customers c ON o.customer_id = c.customer_id

WHERE o.order_date >= '2024-01-01';

-- ❌ Requires join, slowerDecision Framework: Normalize vs Denormalize?

Use NORMALIZATION (3NF) When:

- ✅ Data is frequently written (INSERT, UPDATE, DELETE)

- ✅ Consistency is critical

- ✅ Storage space is limited

- ✅ Database is small to medium size

- ✅ Real-time accuracy is important

Use DENORMALIZATION When:

- ✅ Data is frequently read (SELECT)

- ✅ Write operations are rare

- ✅ Query performance is critical

- ✅ You have plenty of storage

- ✅ Analytics or reporting database

- ✅ You have a Data Warehouse or Data Lake

Practical E-Commerce Example:

sql

-- OPERATIONAL DATABASE (Normalized - for transactions)

CREATE TABLE customers (

customer_id INT PRIMARY KEY,

name VARCHAR(100),

email VARCHAR(100),

phone VARCHAR(20)

);

CREATE TABLE orders (

order_id INT PRIMARY KEY,

customer_id INT,

order_date DATE,

FOREIGN KEY (customer_id) REFERENCES customers(customer_id)

);

CREATE TABLE order_items (

order_item_id INT PRIMARY KEY,

order_id INT,

product_id INT,

quantity INT,

FOREIGN KEY (order_id) REFERENCES orders(order_id)

);

-- ANALYTICS DATABASE (Denormalized - for reporting)

CREATE TABLE order_summary (

order_id INT,

customer_id INT,

customer_name VARCHAR(100), -- Denormalized from customers

customer_email VARCHAR(100), -- Denormalized from customers

order_date DATE,

total_items INT, -- Pre-calculated from order_items

total_amount DECIMAL(10,2), -- Pre-calculated

PRIMARY KEY (order_id)

);Key Takeaway: Use normalized databases for operational systems (OLTP) and denormalized databases for analytics (OLAP).

Question 2: How to Design an Effective Database Schema Using Star Schema and Snowflake Schema?

Question

You’re building a data warehouse for analytics that needs to support millions of queries on sales, customers, products, and time dimensions. Should you use a Star Schema or Snowflake Schema? What are the differences and when to use each?

Detailed Answer

Star Schema: Simple and Fast

Star schema has one fact table (center) and multiple dimension tables (surrounding it).

sql

-- FACT TABLE: Contains metrics and foreign keys to dimensions

CREATE TABLE fact_sales (

sale_id INT PRIMARY KEY,

time_id INT,

customer_id INT,

product_id INT,

store_id INT,

quantity INT,

amount DECIMAL(10,2),

discount DECIMAL(5,2),

FOREIGN KEY (time_id) REFERENCES dim_time(time_id),

FOREIGN KEY (customer_id) REFERENCES dim_customer(customer_id),

FOREIGN KEY (product_id) REFERENCES dim_product(product_id),

FOREIGN KEY (store_id) REFERENCES dim_store(store_id)

);

-- DIMENSION TABLES: Descriptive attributes (denormalized)

CREATE TABLE dim_time (

time_id INT PRIMARY KEY,

date DATE,

year INT,

month INT,

day INT,

quarter INT,

day_of_week VARCHAR(10),

is_weekend BOOLEAN,

holiday_name VARCHAR(50)

);

CREATE TABLE dim_customer (

customer_id INT PRIMARY KEY,

name VARCHAR(100),

email VARCHAR(100),

city VARCHAR(50),

state VARCHAR(50),

country VARCHAR(50),

customer_segment VARCHAR(50), -- Denormalized (could be separate table)

lifetime_value DECIMAL(12,2) -- Denormalized (calculated once)

);

CREATE TABLE dim_product (

product_id INT PRIMARY KEY,

product_name VARCHAR(100),

category VARCHAR(50),

subcategory VARCHAR(50),

brand VARCHAR(50),

price DECIMAL(10,2),

supplier_id INT -- Denormalized for speed

);

CREATE TABLE dim_store (

store_id INT PRIMARY KEY,

store_name VARCHAR(100),

city VARCHAR(50),

state VARCHAR(50),

country VARCHAR(50),

region VARCHAR(50)

);

-- ANALYTICAL QUERY (Simple!)

SELECT

t.year,

t.month,

c.country,

p.category,

COUNT(*) as transaction_count,

SUM(fs.amount) as total_sales,

AVG(fs.amount) as avg_sale

FROM fact_sales fs

JOIN dim_time t ON fs.time_id = t.time_id

JOIN dim_customer c ON fs.customer_id = c.customer_id

JOIN dim_product p ON fs.product_id = p.product_id

WHERE t.year = 2024

GROUP BY t.year, t.month, c.country, p.category;

-- Result: Simple joins, fast queries!Snowflake Schema: Normalized Dimensions

Snowflake extends star schema by normalizing dimension tables.

sql

-- SNOWFLAKE: Same fact table, but dimensions are normalized

CREATE TABLE dim_product (

product_id INT PRIMARY KEY,

product_name VARCHAR(100),

category_id INT,

brand_id INT,

price DECIMAL(10,2),

FOREIGN KEY (category_id) REFERENCES dim_category(category_id),

FOREIGN KEY (brand_id) REFERENCES dim_brand(brand_id)

);

CREATE TABLE dim_category (

category_id INT PRIMARY KEY,

category_name VARCHAR(50),

department VARCHAR(50)

);

CREATE TABLE dim_brand (

brand_id INT PRIMARY KEY,

brand_name VARCHAR(50),

country VARCHAR(50)

);

-- SNOWFLAKE QUERY (Requires more joins)

SELECT

t.year,

t.month,

c.country,

cat.category_name,

b.brand_name,

COUNT(*) as transaction_count,

SUM(fs.amount) as total_sales

FROM fact_sales fs

JOIN dim_time t ON fs.time_id = t.time_id

JOIN dim_product p ON fs.product_id = p.product_id

JOIN dim_category cat ON p.category_id = cat.category_id

JOIN dim_brand b ON p.brand_id = b.brand_id

JOIN dim_customer c ON fs.customer_id = c.customer_id

WHERE t.year = 2024

GROUP BY t.year, t.month, c.country, cat.category_name, b.brand_name;

-- Result: More joins, but saves storage spaceStar vs Snowflake Comparison:

| Factor | Star Schema | Snowflake Schema |

|---|---|---|

| Complexity | Simple (4 dimensions) | Complex (8+ dimensions) |

| Query Performance | Fast (fewer joins) | Slower (more joins) |

| Storage | 10GB for data | 7GB for data |

| Update Ease | Update once per dimension | Update in multiple tables |

| Query Speed | ⭐⭐⭐⭐⭐ Very Fast | ⭐⭐⭐ Moderate |

| Best For | OLAP, Analytics, BI | Large enterprises, strict normalization |

When to Use Star Schema:

- ✅ Analytics and BI reporting (most common)

- ✅ When query speed is critical

- ✅ Smaller to medium data warehouses

- ✅ Real-time dashboards

When to Use Snowflake Schema:

- ✅ Very large data warehouses (100TB+)

- ✅ When storage space is expensive

- ✅ When data integrity is stricter priority than speed

- ✅ Enterprise data warehouses

Practical Recommendation for E-Commerce:

sql

-- START WITH STAR SCHEMA (simpler, faster)

-- Fact table with 5-10 dimensions

-- As warehouse grows and queries become complex,

-- Consider slowly evolving to Snowflake for specific dimensions

-- Example: Keep product dimension denormalized (star),

-- but normalize location data (snowflake) since many tables reference itKey Takeaway: Star schema is faster for analytics. Use it by default unless you have specific reasons for normalization (data integrity needs, massive scale, etc.).

Frequently Asked Questions

Q: What’s the difference between indexing and materialization?

Indexing: Creates a lookup structure on specific columns to speed up WHERE clauses and JOINs.

- Index on

customer_idmakes filtering by customer fast - Useful for: WHERE, JOIN, ORDER BY conditions

Materialization: Pre-calculates and stores results of expensive queries.

- Create a summary table with daily sales by category

- Store the result physically

- Useful for: Expensive aggregations, frequently used results

Q: How often should I reorganize indexes?

For high-write tables: Monthly or quarterly For low-write tables: Quarterly or annually Monitor fragmentation using sys.dm_db_index_physical_stats and reorganize when > 10%

Q: What’s the most impactful SQL optimization technique?

Adding the right indexes typically gives the biggest performance gain (50-70% faster), followed by filtering data early in the query (WHERE clauses before JOINs).

Q: Can denormalization cause problems?

Yes! Risks include:

- Data inconsistency (customer info stored in two places, one gets updated)

- Higher storage costs

- Complex INSERT/UPDATE/DELETE logic

- Use carefully in analytical databases only

Q: What’s the ideal number of indexes per table?

There’s no magic number, but:

- Too few: Queries are slow

- Too many: Inserts/updates become slow

- Sweet spot: 1-5 indexes per table on high-write tables

- Analytical tables can have 10+ indexes since they’re rarely written to

Conclusion

SQL query optimization and data modeling are essential skills for building high-performance database systems. The key takeaways are:

- Always start with execution plans – Understand where the database is spending time

- Index strategically – Focus on WHERE clause columns and JOIN conditions

- Filter early, join later – Apply WHERE filters before JOINs

- Use CTEs over nested subqueries – More readable and performant

- Normalize transactional databases, denormalize analytical databases – Different systems have different needs

- Use Star Schema for analytics – Simple, fast, and effective

- Monitor and maintain – Fragmentation degrades performance over time

With these techniques, you can improve query performance by 90-95% without expensive hardware upgrades.