SQL Series – This series aim to make sure you are able to learn and understand at least 100 of the most asked concepts in SQL interviews.

Today is Day 4 of the SQL Series – SQL Transactions and Concurrency Interview Questions

Bookmark this page, and make sure to be consistent in it. Also, get a notebook and make notes of each question/concept put in here

We have created this series to make you practice and learn ~120 Most Asked SQL interview Questions

Day 1 – Basic SQL Questions + Most Asked SQL Interview Questions

Day 2 – JSON, Date and Time SQL Interview Questions

Day 3 – SQL Query Optimization and Data Modeling Interview Questions

Day 4 – SQL Transactions and Concurrency Interview Questions

Day 5 – SQL Theory + SQL Hard Interview Questions

Day 6 – Advanced SQL Aggregation + Advanced Joins

Day 7 – Error Handling & Debugging + Stored Procedures & CTE

Day 8 – Complex Data Handling

Day 9 – Data Modeling

Day 10 – SQL Most Asked Query Interview Questions

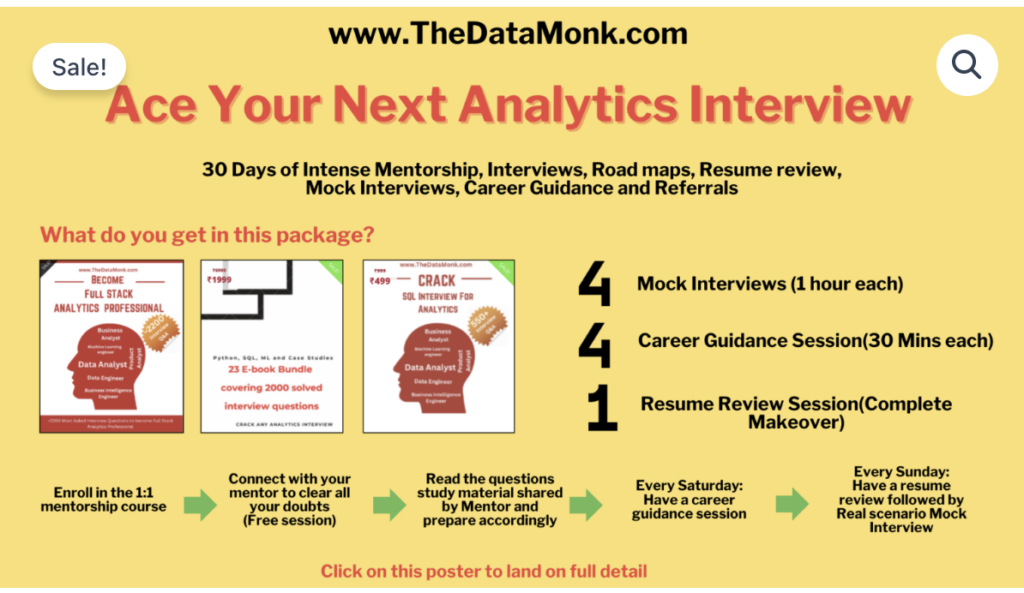

The Data Monk Best Seller Books and Services

1. Become a Full Stack Analytics Professional – 2200 Interview Questions covering 23 topics

2. Book mock interviews/Career Guidance Session on Top Mate to practice

And below is our master class

1 Month Complete 1:1 Mentorship along with 3 e-books, 4 mock interviews, 4 career guidance sessions, and a complete resume review

You can also go through our The Data Monk’s Youtube Channel with 160+ videos across all the top interview topics

Table of Contents:

SQL Transactions and Concurrency Control: 9 Interview Questions with Detailed Solutions

SQL transactions and concurrency control are fundamental concepts in database management that often appear in technical interviews for database engineers, backend developers, and data architects. Understanding how to handle multiple concurrent users, prevent data corruption, and manage database consistency is critical for building robust applications.

In this comprehensive guide, we’ll walk through 9 real-world interview questions with detailed explanations, code examples, and practical solutions that you can use to ace your next interview.

SQL Transactions: Core Concepts

A transaction is a sequence of SQL operations that are executed as a single unit of work. The ACID properties (Atomicity, Consistency, Isolation, Durability) ensure data integrity.

Question 1: What is a Database Transaction and Why Do We Need ACID Properties?

Interview Question:

“Explain what a database transaction is and explain each of the ACID properties. Why can’t we just update data without these constraints?”

Simple Answer:

A database transaction is a group of SQL statements that must all succeed or all fail together. Think of it like a bank transfer: you need to subtract money from one account AND add it to another. If only one happens, the data becomes inconsistent.

ACID Properties:

- Atomicity: All-or-nothing. Either the entire transaction completes, or nothing happens. No partial updates.

- Consistency: The database moves from one valid state to another valid state. Rules and constraints are always maintained.

- Isolation: Concurrent transactions don’t interfere with each other. One user’s changes don’t affect another user’s reads.

- Durability: Once committed, data stays in the database forever, even if there’s a server crash.

Code Example:

-- Example: Bank Transfer Transaction

BEGIN TRANSACTION;

UPDATE Accounts SET balance = balance - 100

WHERE account_id = 1;

UPDATE Accounts SET balance = balance + 100

WHERE account_id = 2;

COMMIT; -- Both updates happen or neither happensWhy ACID matters: Without ACID, you could lose money (atomicity), create negative balances (consistency), have users seeing partially updated data (isolation), or lose confirmed transfers after a crash (durability).

Question 2: What’s the Difference Between COMMIT and ROLLBACK? Provide a Real-World Example.

Interview Question:

“Walk me through how COMMIT and ROLLBACK work in a transaction. When would you use ROLLBACK instead of COMMIT?”

Simple Answer:

COMMIT saves all changes made in the transaction permanently to the database.

ROLLBACK undoes all changes made in the transaction, returning the database to its previous state.

Code Example with Error Handling:

-- Example: Order Processing with Rollback

BEGIN TRANSACTION;

INSERT INTO Orders (customer_id, order_date, total_amount)

VALUES (101, GETDATE(), 500);

INSERT INTO OrderItems (order_id, product_id, quantity, price)

VALUES (5001, 3, 2, 250);

-- Check if inventory is available

DECLARE @AvailableQty INT;

SELECT @AvailableQty = quantity FROM Inventory WHERE product_id = 3;

IF @AvailableQty < 2

BEGIN

ROLLBACK; -- Undo the order

PRINT 'Transaction rolled back - Insufficient inventory';

END

ELSE

BEGIN

UPDATE Inventory SET quantity = quantity - 2 WHERE product_id = 3;

COMMIT; -- Save everything

PRINT 'Order processed successfully';

ENDReal-world scenario: When processing an order, you insert the order header and items. If inventory check fails, you ROLLBACK to prevent a fake order from existing. If all checks pass, you COMMIT.

Question 3: Explain the Difference Between Implicit and Explicit Transactions. Which Should You Use and Why?

Interview Question:

“Some databases use implicit transactions by default while others require explicit BEGIN/COMMIT. What’s the difference and which is safer for critical operations?”

Simple Answer:

Implicit Transactions: Each SQL statement is automatically wrapped in a transaction. The database auto-commits after each statement.

Explicit Transactions: You explicitly declare the start with BEGIN TRANSACTION and end with COMMIT or ROLLBACK.

Code Comparison:

-- IMPLICIT TRANSACTION (Default in MySQL, SQLite)

UPDATE Users SET status = 'active' WHERE user_id = 5; -- Auto-commits immediately

DELETE FROM Sessions WHERE user_id = 5; -- If this fails, the UPDATE already happened

-- EXPLICIT TRANSACTION (Better for related operations)

BEGIN TRANSACTION;

UPDATE Users SET status = 'active' WHERE user_id = 5;

DELETE FROM Sessions WHERE user_id = 5;

COMMIT; -- Both happen together or neither happensWhich is better? Explicit transactions are safer for multi-step operations because:

- You control exactly when changes are committed

- Related operations stay grouped together

- Errors can trigger ROLLBACK to maintain consistency

- Better for critical operations like financial transactions

Isolation Levels and Concurrency Problems

When multiple users access the database simultaneously, isolation levels determine how their transactions interact and what consistency issues might occur.

Question 4: Explain the 4 Isolation Levels. What Problems Do They Solve/Create?

Interview Question:

“Walk me through READ UNCOMMITTED, READ COMMITTED, REPEATABLE READ, and SERIALIZABLE. For each, explain what problems they solve and what issues they allow.”

Simple Answer with Problem Scenarios:

READ UNCOMMITTED (Lowest Level – Most Problems):

Allows reading uncommitted data from other transactions. Can see “dirty reads” (incomplete data).

| Scenario: | User A transfers $100. User B sees the deducted balance before User A confirms. |

| Problem: | Dirty reads – reading incomplete/uncommitted data |

| Use Case: | Analytics reports where slight inaccuracy is acceptable |

SET TRANSACTION ISOLATION LEVEL READ UNCOMMITTED;

-- Highest performance, lowest safetyREAD COMMITTED (Default in Most Databases):

Only reads committed data. Prevents dirty reads but allows non-repeatable reads.

| Scenario: | User A queries product price ($50). Someone changes it to $60. User A queries again and gets $60. |

| Problem: | Non-repeatable reads – same query returns different data |

| Use Case: | Most web applications, e-commerce sites |

SET TRANSACTION ISOLATION LEVEL READ COMMITTED;

-- Good balance of performance and safetyREPEATABLE READ (MySQL Default):

Data read in a transaction stays the same for the entire transaction. Prevents non-repeatable reads but allows phantom reads.

| Scenario: | User A queries orders for customer 5 (gets 3 orders). New order is inserted. Same query returns 4 orders. |

| Problem: | Phantom reads – new rows appear between queries |

| Use Case: | Banking systems, financial reports |

SET TRANSACTION ISOLATION LEVEL REPEATABLE READ;

-- Prevents most consistency issuesSERIALIZABLE (Highest Level – Best Safety):

Transactions execute one after another. No dirty, non-repeatable, or phantom reads. Slowest performance.

| Use Case: | Mission-critical systems like stock exchanges, bank core systems |

| Trade-off: | Maximum safety, minimum performance |

SET TRANSACTION ISOLATION LEVEL SERIALIZABLE;

-- Slowest but safest - use only when data accuracy is criticalQuick Comparison Table:

| Level | Dirty Read | Non-Repeatable Read | Phantom Read | Performance |

|---|---|---|---|---|

| READ UNCOMMITTED | ✗ Possible | ✗ Possible | ✗ Possible | ⚡ Fastest |

| READ COMMITTED | ✓ Prevented | ✗ Possible | ✗ Possible | ⚡ Fast |

| REPEATABLE READ | ✓ Prevented | ✓ Prevented | ✗ Possible | 🐢 Slower |

| SERIALIZABLE | ✓ Prevented | ✓ Prevented | ✓ Prevented | 🐌 Slowest |

Question 5: What is a Dirty Read, Non-Repeatable Read, and Phantom Read? Provide Code Examples.

Interview Question:

“Explain dirty reads, non-repeatable reads, and phantom reads. Show me code that demonstrates each problem and explain why they occur.”

Dirty Read Example:

What is it? Reading data that hasn’t been committed yet.

-- SESSION 1 (Transaction NOT committed)

BEGIN TRANSACTION;

UPDATE Accounts SET balance = 900 WHERE account_id = 1;

-- Transaction still open, not committed yet

-- SESSION 2 (Reading uncommitted data)

SET TRANSACTION ISOLATION LEVEL READ UNCOMMITTED;

SELECT balance FROM Accounts WHERE account_id = 1;

-- Returns 900 (dirty/uncommitted data)

-- Back to SESSION 1

ROLLBACK; -- Oops! Changes undone

-- SESSION 2's data is now wrong - it read a balance that never actually happened!Non-Repeatable Read Example:

What is it? The same query returns different data within the same transaction.

-- SESSION 1

SET TRANSACTION ISOLATION LEVEL READ COMMITTED;

BEGIN TRANSACTION;

SELECT balance FROM Accounts WHERE account_id = 1;

-- Returns 1000

-- SESSION 2 (While SESSION 1 is still open)

UPDATE Accounts SET balance = 500 WHERE account_id = 1;

COMMIT;

-- Back to SESSION 1 (same transaction)

SELECT balance FROM Accounts WHERE account_id = 1;

-- Returns 500 - DIFFERENT from the first query!

-- This is a non-repeatable read

COMMIT;Phantom Read Example:

What is it? New rows appear in a query result between two identical queries in the same transaction.

-- SESSION 1

SET TRANSACTION ISOLATION LEVEL REPEATABLE READ;

BEGIN TRANSACTION;

SELECT COUNT(*) FROM Orders WHERE customer_id = 101;

-- Returns 5 orders

-- SESSION 2 (While SESSION 1 is still open)

INSERT INTO Orders (customer_id, order_date)

VALUES (101, GETDATE());

COMMIT;

-- Back to SESSION 1

SELECT COUNT(*) FROM Orders WHERE customer_id = 101;

-- Returns 6 orders - A NEW ROW APPEARED!

-- This is a phantom read

COMMIT;Question 6: How Would You Choose an Isolation Level for Your Application? Walk Through Your Decision Process.

Interview Question:

“If you’re designing a new banking application, a social media platform, and an analytics dashboard, which isolation levels would you choose for each and why?”

Decision Framework:

- Assess data sensitivity: How critical is correctness? Will errors cost money or reputation?

- Consider concurrency: How many concurrent users? Will higher isolation levels cause performance issues?

- Evaluate consistency requirements: Can stale data cause problems?

- Test performance: Measure the actual impact of each level

Real-World Application Examples:

Banking Application:

-- Banking: Use SERIALIZABLE

-- Why: Money is involved. Even one error costs the company.

-- Impact: High concurrency isn't as important as accuracy.

-- Typical users: Thousands, not millions

SET TRANSACTION ISOLATION LEVEL SERIALIZABLE;

BEGIN TRANSACTION;

UPDATE Accounts SET balance = balance - 100 WHERE account_id = 1;

UPDATE Accounts SET balance = balance + 100 WHERE account_id = 2;

COMMIT;Social Media Platform (Like Twitter/Instagram):

-- Social Media: Use READ COMMITTED or REPEATABLE READ

-- Why: Slight delays in seeing new posts are acceptable.

-- 100ms old data won't break anything.

-- Impact: Millions of concurrent users. Need performance.

SET TRANSACTION ISOLATION LEVEL READ COMMITTED;

BEGIN TRANSACTION;

INSERT INTO Posts (user_id, content) VALUES (5, 'Hello world!');

UPDATE Users SET post_count = post_count + 1 WHERE user_id = 5;

COMMIT;Analytics Dashboard:

-- Analytics: Use READ UNCOMMITTED

-- Why: Queries are read-only. 1% inaccuracy in reports is fine.

-- Impact: Extremely fast performance. Can analyze millions of rows.

SET TRANSACTION ISOLATION LEVEL READ UNCOMMITTED;

SELECT

DATE_TRUNC('day', created_at) as day,

COUNT(*) as total_orders,

SUM(amount) as revenue

FROM Orders

WHERE created_at >= CURRENT_DATE - 30

GROUP BY DATE_TRUNC('day', created_at);Deadlocks and Concurrency Conflicts

Deadlocks occur when two transactions are waiting for each other to release locks, creating a circular dependency. Understanding how to prevent and handle them is crucial.

Question 7: What is a Deadlock? How Would You Identify and Prevent One?

Interview Question:

“Explain what a deadlock is, show me code that causes one, and then tell me how you’d prevent it.”

What is a Deadlock?

A deadlock occurs when two transactions are waiting for each other to release locks in a circular chain:

Transaction A: Locks Account 1 → Waits for Account 2

Transaction B: Locks Account 2 → Waits for Account 1

Both are stuck forever!

Code That Causes a Deadlock:

-- SESSION 1: Transfer $100 from Account 1 to Account 2

BEGIN TRANSACTION;

UPDATE Accounts SET balance = balance - 100 WHERE account_id = 1;

-- Holds lock on Account 1

WAITFOR DELAY '00:00:05'; -- Simulate slow operation

UPDATE Accounts SET balance = balance + 100 WHERE account_id = 2;

-- Waits for lock on Account 2 (held by Session 2)

COMMIT;

-- SESSION 2: Transfer $50 from Account 2 to Account 1 (opposite order)

BEGIN TRANSACTION;

UPDATE Accounts SET balance = balance - 50 WHERE account_id = 2;

-- Holds lock on Account 2

WAITFOR DELAY '00:00:05'; -- Simulate slow operation

UPDATE Accounts SET balance = balance + 50 WHERE account_id = 1;

-- Waits for lock on Account 1 (held by Session 1)

COMMIT;

-- RESULT: DEADLOCK! Both transactions are waiting for each other.How to Prevent Deadlocks:

Strategy 1: Consistent Ordering (Best Solution)

Always access resources in the same order across all transactions.

-- SESSION 1: Always update lower account_id first

BEGIN TRANSACTION;

UPDATE Accounts SET balance = balance - 100 WHERE account_id = 1;

UPDATE Accounts SET balance = balance + 100 WHERE account_id = 2;

COMMIT;

-- SESSION 2: Also always update lower account_id first

BEGIN TRANSACTION;

UPDATE Accounts SET balance = balance - 50 WHERE account_id = 1;

UPDATE Accounts SET balance = balance + 50 WHERE account_id = 2;

COMMIT;

-- NO DEADLOCK! Both access accounts in order 1, then 2Strategy 2: Shorter Transactions

Keep transactions small and fast. Less time holding locks = less chance of deadlock.

-- BAD: Long transaction with calculations outside DB

BEGIN TRANSACTION;

UPDATE Account1...;

-- Do complex calculations here (5 seconds)

UPDATE Account2...;

COMMIT;

-- GOOD: Do calculations first, then lock

DECLARE @amount INT = CalculateAmount(...); -- No locks held

BEGIN TRANSACTION;

UPDATE Account1...;

UPDATE Account2...;

COMMIT; -- Transaction completes in millisecondsStrategy 3: Handle Deadlocks with Retry Logic

When a deadlock occurs, retry the transaction automatically.

-- SQL Server Example

DECLARE @MaxRetries INT = 3;

DECLARE @Retry INT = 0;

DECLARE @Success BIT = 0;

WHILE @Retry < @MaxRetries AND @Success = 0

BEGIN

BEGIN TRY

BEGIN TRANSACTION;

UPDATE Accounts SET balance = balance - 100 WHERE account_id = 1;

UPDATE Accounts SET balance = balance + 100 WHERE account_id = 2;

COMMIT;

SET @Success = 1; -- Success

END TRY

BEGIN CATCH

IF ERROR_NUMBER() = 1205 -- Deadlock error code

BEGIN

ROLLBACK;

SET @Retry = @Retry + 1;

WAITFOR DELAY '00:00:00.100'; -- Wait 100ms before retry

END

ELSE

BEGIN

THROW; -- Other error, don't retry

END

END CATCH

ENDQuestion 8: Explain Optimistic vs. Pessimistic Locking. When Would You Use Each?

Interview Question:

“There are two main locking strategies: optimistic and pessimistic. Explain how each works, show me the implementation, and tell me which you’d use for an inventory system vs. a blog platform.”

Pessimistic Locking: “Lock Everything”

Philosophy: Assume conflicts will happen. Lock data immediately when you read it.

Use Case: High-conflict scenarios (inventory, financial systems)

-- Inventory System: Lock the product while updating

BEGIN TRANSACTION;

SELECT @CurrentStock = quantity

FROM Products WITH (XLOCK) -- Exclusive lock - no one else can read/write

WHERE product_id = 5;

IF @CurrentStock >= 10

BEGIN

UPDATE Products

SET quantity = quantity - 10

WHERE product_id = 5;

INSERT INTO OrderItems (product_id, quantity) VALUES (5, 10);

COMMIT;

END

ELSE

BEGIN

ROLLBACK;

PRINT 'Out of stock';

ENDAdvantages: Prevents conflicts entirely, simple logic

Disadvantages: Locks cause contention, slower, can deadlock

Optimistic Locking: “Assume No Conflicts”

Philosophy: Don’t lock. Instead, check if data changed before committing.

Use Case: Low-conflict scenarios (blogs, social media comments)

-- Blog Posts: Use version column for optimistic locking

CREATE TABLE BlogPosts (

post_id INT PRIMARY KEY,

title VARCHAR(200),

content TEXT,

version INT DEFAULT 1 -- Version number increases with each update

);

-- User reads a post

SELECT @Title = title, @Content = content, @Version = version

FROM BlogPosts

WHERE post_id = 1;

-- User edits and tries to save

BEGIN TRANSACTION;

UPDATE BlogPosts

SET title = 'New Title',

content = 'New Content',

version = version + 1

WHERE post_id = 1

AND version = @Version; -- Only update if version hasn't changed

IF @@ROWCOUNT = 0

BEGIN

-- Someone else updated the post

ROLLBACK;

PRINT 'Post was modified by another user. Please refresh.';

END

ELSE

BEGIN

COMMIT;

PRINT 'Post updated successfully';

ENDAdvantages: No locks, high concurrency, fast

Disadvantages: Requires retry logic, can reject valid updates

Decision Matrix:

| Scenario | Locking Strategy | Why |

|---|---|---|

| Inventory Management | Pessimistic | Many users competing for same stock. Must prevent overselling. |

| Blog Comments | Optimistic | Few users edit same comment. Can retry if conflict. |

| Bank Transfers | Pessimistic | Critical data. Every conflict must be prevented. |

| Product Reviews | Optimistic | Different users editing different reviews. Rare conflicts. |

Question 9: Design a Solution for a High-Concurrency E-Commerce Checkout System. How Would You Handle Transactions?

Interview Question:

“Design the transaction handling for an e-commerce checkout where thousands of users are simultaneously buying limited inventory. Walk me through your approach from reading inventory to payment processing.”

Architecture Overview:

1. User views product (READ UNCOMMITTED - speed, no lock)

2. User clicks "Add to Cart" (no database transaction yet)

3. User proceeds to checkout (validate stock, short pessimistic lock)

4. Process payment (external system, not in DB transaction)

5. Confirm order (atomic transaction with inventory deduction)

6. Send confirmation email (after transaction commits)Implementation:

-- Step 1: User views product (no lock, stale data is OK)

SET TRANSACTION ISOLATION LEVEL READ UNCOMMITTED;

SELECT product_id, name, price, quantity

FROM Products

WHERE product_id = 123;

-- Step 2: Validate stock (quick pessimistic lock)

BEGIN TRANSACTION;

SELECT @AvailableStock = quantity

FROM Inventory WITH (XLOCK) -- Exclusive lock, short duration

WHERE product_id = 123;

IF @AvailableStock < @RequestedQuantity

BEGIN

ROLLBACK;

RETURN 'Out of stock';

END

COMMIT; -- Release lock immediately

-- Step 3: Process external payment (NOT in transaction)

DECLARE @PaymentSuccessful BIT = CallPaymentAPI(...);

-- Step 4: Confirm order (atomic transaction)

BEGIN TRANSACTION;

INSERT INTO Orders (customer_id, order_date, total)

VALUES (@CustomerID, GETDATE(), @Total);

SELECT @OrderID = SCOPE_IDENTITY();

INSERT INTO OrderItems (order_id, product_id, quantity, price)

VALUES (@OrderID, 123, @RequestedQuantity, @ProductPrice);

UPDATE Inventory

SET quantity = quantity - @RequestedQuantity,

last_updated = GETDATE()

WHERE product_id = 123;

-- Only commits if all succeeded

COMMIT;

-- Step 5: Send email (after transaction)

SendConfirmationEmail(@CustomerEmail, @OrderID);Why This Design Works:

- Speed: Views use READ UNCOMMITTED (no locks)

- Accuracy: Stock check uses quick exclusive lock

- Isolation: Payment is outside transaction (can fail safely)

- Consistency: Final order confirmation is atomic

- Safety: Email only sent after everything succeeds

Handling Edge Cases:

-- Race condition: What if stock drops between validation and order?

-- Solution: Check again during order confirmation

BEGIN TRANSACTION;

-- Re-validate stock

SELECT @FinalStock = quantity

FROM Inventory WITH (XLOCK)

WHERE product_id = 123;

IF @FinalStock < @RequestedQuantity

BEGIN

ROLLBACK;

RETURN 'Item sold out during checkout';

END

-- Now safe to proceed with order

INSERT INTO Orders...

UPDATE Inventory...

COMMIT;

-- Payment failed but order was created? Use a status flag:

CREATE TABLE Orders (

order_id INT,

customer_id INT,

status VARCHAR(20) DEFAULT 'PENDING', -- PENDING, PAID, FAILED

created_at DATETIME

);

-- If payment fails, mark order as FAILED instead of deleting

UPDATE Orders SET status = 'FAILED' WHERE order_id = 123;Key Takeaways for Interview Success

- ACID matters: Always explain why transactions are important for data integrity

- Isolation = trade-off: Higher isolation = more safety but slower. Know when to use each level.

- Deadlocks are common: Be prepared with prevention strategies (ordering, retry logic, shorter transactions)

- Locking strategy: Choose pessimistic for high-contention, optimistic for low-contention scenarios

- Real-world thinking: Interviewers like candidates who think about performance AND correctness together

- Show your process: Walk through your decision-making, don’t just give answers

Additional Resources

- SQL Server Documentation: Isolation Levels and Locking

- MySQL Documentation: InnoDB Transaction Model

- PostgreSQL Documentation: Transactions and Concurrency

- Practice: Set up a local database and deliberately create deadlocks to understand them better

Did this help? Share your thoughts in the comments below. Which isolation level do you use most in your applications?