SQL Series – This series aim to make sure you are able to learn and understand at least 100 of the most asked concepts in SQL interviews.

Today is Day 5 of the SQL Series – SQL Hard Interview Questions

Bookmark this page, and make sure to be consistent in it. Also, get a notebook and make notes of each question/concept put in here

We have created this series to make you practice and learn ~120 Most Asked SQL interview Questions

Day 1 – Basic SQL Questions + Most Asked SQL Interview Questions

Day 2 – JSON, Date and Time SQL Interview Questions

Day 3 – SQL Query Optimization and Data Modeling Interview Questions

Day 4 – Transactions and Concurrency

Day 5 – SQL Hard Interview Questions

Day 6 – Advanced SQL Aggregation + Advanced Joins

Day 7 – Error Handling & Debugging + Stored Procedures & CTE

Day 8 – Complex Data Handling

Day 9 – Data Modeling

Day 10 – SQL Most Asked Query Interview Questions

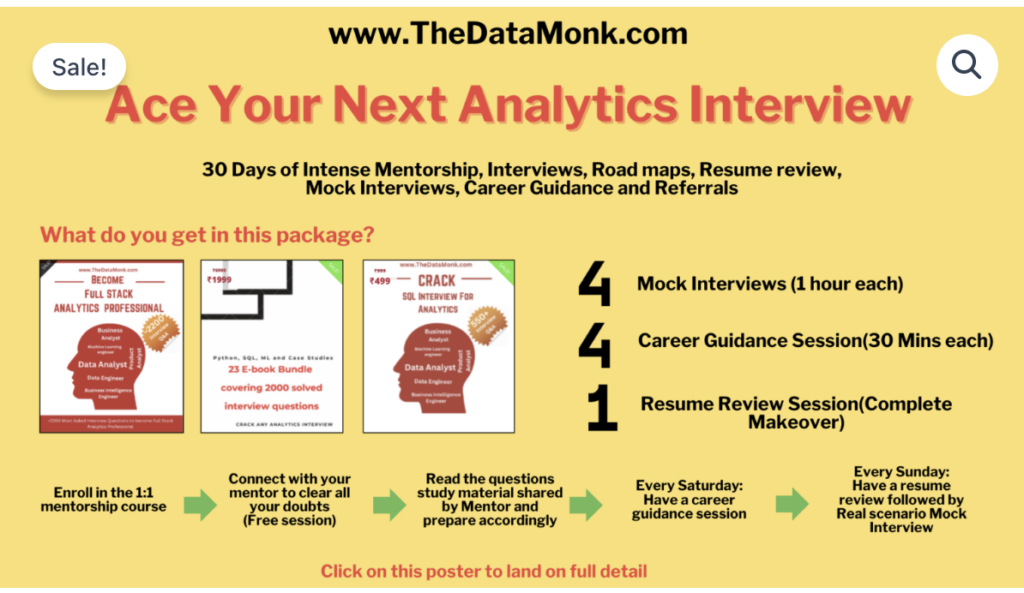

The Data Monk Best Seller Books and Services

1. Become a Full Stack Analytics Professional in 2026 – 2200 Interview Questions covering 23 topics

2. Book mock interviews/Career Guidance Session on Top Mate to practice

And below is our master class

1 Month Complete 1:1 Mentorship along with 3 e-books, 4 mock interviews, 4 career guidance sessions, and a complete resume review

You can also go through our The Data Monk’s Youtube Channel with 160+ videos across all the top interview topics

Question 1: Find the Second Highest Salary in Each Department Without Using Window Functions

Interview Question:

“Write a query to find the second highest salary in each department. You cannot use RANK(), ROW_NUMBER(), or any window functions. Explain your approach.”

The Interview Scenario:

This is a real senior-level question that tests:

- Can you solve complex problems without modern features?

- Do you understand self-joins and correlated subqueries?

- Can you handle edge cases (ties, departments with <1 salary)?

- What’s your thought process when explaining a solution?

Sample Data:

-- Table Structure

CREATE TABLE Employees (

employee_id INT PRIMARY KEY,

name VARCHAR(100),

department_id INT,

salary DECIMAL(10, 2)

);

-- Sample Data

INSERT INTO Employees VALUES

(1, 'John', 1, 60000),

(2, 'Alice', 1, 75000),

(3, 'Bob', 1, 70000),

(4, 'Charlie', 2, 80000),

(5, 'Diana', 2, 85000),

(6, 'Eve', 2, 82000),

(7, 'Frank', 3, 50000); -- Department 3 has only 1 employee

-- Expected Output:

-- department_id | second_highest_salary

-- 1 | 70000 (highest: 75000, second: 70000, third: 60000)

-- 2 | 83000 (highest: 85000, second: 83000, third: 82000)

-- 3 | NULL (only 1 salary in this department)

Solution 1: Using Self-Join with DISTINCT

SELECT

d1.department_id,

MAX(d1.salary) AS second_highest_salary

FROM Employees d1

WHERE d1.salary < (

-- Find max salary in the same department

SELECT MAX(salary)

FROM Employees d2

WHERE d2.department_id = d1.department_id

)

GROUP BY d1.department_id;

-- Explanation:

-- 1. Outer query finds all salaries LESS than the max in their department

-- 2. We take MAX of those salaries (second highest)

-- 3. GROUP BY ensures we get one result per department

-- 4. Naturally handles edge cases:

-- - If department has only 1 salary: subquery returns that salary,

-- no records satisfy the WHERE clause, no output = NULL

-- - If multiple employees have same salary: DISTINCT handles ties

Solution 2: Using Correlated Subquery (More Explicit)

SELECT DISTINCT

d1.department_id,

(SELECT MAX(salary)

FROM Employees d2

WHERE d2.department_id = d1.department_id

AND d2.salary < (

SELECT MAX(salary)

FROM Employees d3

WHERE d3.department_id = d1.department_id

)

) AS second_highest_salary

FROM Employees d1

WHERE (SELECT COUNT(DISTINCT salary) FROM Employees d4 WHERE d4.department_id = d1.department_id) >= 2;

-- Why this works:

-- - Explicitly finds salary less than max, then gets max of those

-- - WHERE clause filters to only departments with 2+ distinct salaries

-- - Uses DISTINCT to avoid duplicate rows in output

What the Interviewer Is Testing:

| Aspect | What They’re Looking For |

|---|---|

| Self-Join Mastery | Can you join a table to itself? Do you understand aliases? |

| Subquery Logic | Do you understand correlated subqueries vs. regular subqueries? |

| Edge Cases | Did you think about departments with <2 salaries? Tied salaries? |

| Explanation Ability | Can you walk through your solution step-by-step clearly? |

How to Explain This in an Interview:

“The key insight is that second highest salary is the maximum salary that’s still less than the maximum salary in the department.

My approach:

- Find the max salary per department (this is the highest)

- Filter salaries that are less than that max

- Find the max of those filtered salaries (now you have the second highest)

- Group by department to get one result per department

Edge case: If a department has only 1 salary, the WHERE clause finds nothing, so we return NULL—which is correct behavior.”

Question 2: Find Duplicate Emails and Show All Their Details, Including a Running Count

Interview Question:

“You have a users table with millions of rows. Find all duplicate emails (emails that appear more than once). For each duplicate email, list all users with that email AND add a column showing which occurrence it is (1st, 2nd, 3rd, etc.). Optimize for performance.”

The Interview Scenario:

This is testing:

- Can you identify duplicates (GROUP BY + HAVING)?

- Can you show ALL matching rows (not just the count)?

- Can you add ranking/numbering (self-join or window function)?

- Do you think about performance with millions of rows?

- Can you explain why your approach scales well or doesn’t?

Sample Data:

CREATE TABLE Users (

user_id INT PRIMARY KEY,

email VARCHAR(100),

name VARCHAR(100),

created_at DATETIME

);

INSERT INTO Users VALUES

(1, 'john@example.com', 'John Doe', '2020-01-01'),

(2, 'alice@example.com', 'Alice Smith', '2020-01-02'),

(3, 'john@example.com', 'John Johnson', '2020-06-01'), -- Duplicate email

(4, 'bob@example.com', 'Bob Wilson', '2020-02-01'),

(5, 'alice@example.com', 'Alice Brown', '2020-07-01'), -- Another duplicate

(6, 'alice@example.com', 'Alice White', '2020-12-01'); -- Third occurrence

-- Expected Output:

-- user_id | email | name | occurrence

-- 1 | john@example.com | John Doe | 1

-- 3 | john@example.com | John Johnson | 2

-- 2 | alice@example.com | Alice Smith | 1

-- 5 | alice@example.com | Alice Brown | 2

-- 6 | alice@example.com | Alice White | 3

Solution 1: Using Window Functions (Most Modern)

-- Best approach if window functions are allowed

WITH duplicate_emails AS (

SELECT

user_id,

email,

name,

ROW_NUMBER() OVER (PARTITION BY email ORDER BY created_at) as occurrence,

COUNT(*) OVER (PARTITION BY email) as email_count

FROM Users

)

SELECT

user_id,

email,

name,

occurrence

FROM duplicate_emails

WHERE email_count > 1

ORDER BY email, occurrence;

-- Why this works:

-- ROW_NUMBER() OVER (PARTITION BY email ORDER BY created_at)

-- = Assigns 1, 2, 3... to each email group, ordered by creation date

-- COUNT() OVER (PARTITION BY email)

-- = Counts total occurrences per email

-- WHERE email_count > 1

-- = Filters to only duplicate emails

-- Performance: O(n log n) due to sorting within partitions

Solution 2: Using Self-Join (Without Window Functions)

-- If window functions aren't available

SELECT

u1.user_id,

u1.email,

u1.name,

COUNT(u2.user_id) as occurrence

FROM Users u1

JOIN Users u2 ON u1.email = u2.email

AND u2.created_at <= u1.created_at -- Count only those created before/on this user

INNER JOIN (

-- First find which emails are duplicated

SELECT email

FROM Users

GROUP BY email

HAVING COUNT(*) > 1

) duplicates ON u1.email = duplicates.email

GROUP BY u1.user_id, u1.email, u1.name

ORDER BY u1.email, occurrence;

-- Why this works:

-- u1 = the user we're looking at

-- u2 = all users with same email created at or before u1

-- COUNT(u2.user_id) = how many such users exist = the occurrence number

-- duplicates subquery = filters to only duplicate emails

-- Performance: O(n²) in worst case (many duplicates), so less ideal for large datasets

Performance Comparison:

| Approach | Time Complexity | Space Complexity | Best For |

|---|---|---|---|

| Window Functions | O(n log n) | O(n) | Modern DBs, any dataset size |

| Self-Join | O(n²) | O(n) | Few duplicates, legacy DBs |

What the Interviewer Is Testing:

- Problem Breakdown: Can you understand the problem has 2 parts? (1) Find duplicates, (2) Number them

- Multiple Solutions: Do you know multiple approaches? Can you compare them?

- Performance Thinking: Will your solution work with 1 million rows? 100 million?

- Tool Selection: Do you know when to use window functions vs. self-joins?

- Explainability: Can you explain why one approach is better than another?

Question 3: Detect Fraud – Find Users With Logins From Impossible Locations

Interview Question:

“You have a login table with user_id, city, timestamp, and latitude/longitude. Write a query to detect when a user logs in from two cities that are too far apart in too short a time (physically impossible). For example, if a user logs in from New York at 10:00 AM and London at 10:15 AM (15 minutes apart, 3,500 miles), it’s fraud.”

The Interview Scenario:

This is a real business problem that tests:

- Can you work with geographic data (distance calculations)?

- Can you find patterns across multiple rows for the same user?

- Do you understand edge cases (what’s “too fast”)?

- Can you write production-grade code (not just toy examples)?

- Do you understand the tradeoffs between accuracy and false positives?

Sample Data:

CREATE TABLE LoginActivity (

login_id INT PRIMARY KEY,

user_id INT,

city VARCHAR(50),

latitude DECIMAL(9, 6),

longitude DECIMAL(9, 6),

login_time DATETIME

);

INSERT INTO LoginActivity VALUES

(1, 101, 'New York', 40.7128, -74.0060, '2024-01-15 10:00:00'),

(2, 101, 'Boston', 42.3601, -71.0589, '2024-01-15 10:20:00'), -- 215 miles in 20 min: OK

(3, 101, 'London', 51.5074, -0.1278, '2024-01-15 10:35:00'), -- 3,459 miles in 35 min: FRAUD

(4, 102, 'San Francisco', 37.7749, -122.4194, '2024-01-15 09:00:00'),

(5, 102, 'New York', 40.7128, -74.0060, '2024-01-15 14:00:00'), -- 2,900 miles in 5 hours: OK

(6, 103, 'Paris', 48.8566, 2.3522, '2024-01-15 12:00:00'),

(7, 103, 'Berlin', 52.5200, 13.4050, '2024-01-15 12:10:00'); -- 550 miles in 10 min: FRAUD

-- Expected Output:

-- user_id | city_from | city_to | time_diff_minutes | distance_miles | speed_mph

-- 101 | New York | London | 35 | 3459 | 5926 (impossible)

-- 103 | Paris | Berlin | 10 | 550 | 3300 (impossible)

Solution: Using Haversine Formula + Window Functions

WITH login_sequence AS (

SELECT

user_id,

city,

latitude,

longitude,

login_time,

LAG(city) OVER (PARTITION BY user_id ORDER BY login_time) as prev_city,

LAG(latitude) OVER (PARTITION BY user_id ORDER BY login_time) as prev_lat,

LAG(longitude) OVER (PARTITION BY user_id ORDER BY login_time) as prev_lon,

LAG(login_time) OVER (PARTITION BY user_id ORDER BY login_time) as prev_login_time

FROM LoginActivity

),

distance_calculated AS (

SELECT

user_id,

prev_city,

city,

-- Haversine formula: Calculate distance between two lat/lon points

-- Result in miles: multiply by 3959 (Earth radius in miles)

3959 * ACOS(

SIN(RADIANS(prev_lat)) * SIN(RADIANS(latitude)) +

COS(RADIANS(prev_lat)) * COS(RADIANS(latitude)) *

COS(RADIANS(prev_lon) - RADIANS(longitude))

) as distance_miles,

TIMESTAMPDIFF(MINUTE, prev_login_time, login_time) as time_diff_minutes

FROM login_sequence

WHERE prev_city IS NOT NULL -- Skip first login per user

)

SELECT

user_id,

prev_city as city_from,

city as city_to,

time_diff_minutes,

ROUND(distance_miles, 0) as distance_miles,

ROUND(distance_miles * 60 / time_diff_minutes, 0) as speed_mph,

CASE

WHEN distance_miles * 60 / time_diff_minutes > 600 THEN 'LIKELY FRAUD'

WHEN distance_miles * 60 / time_diff_minutes > 300 THEN 'SUSPICIOUS'

ELSE 'NORMAL'

END as fraud_score

FROM distance_calculated

WHERE distance_miles * 60 / time_diff_minutes > 300 -- Speed > 300 mph = suspicious

ORDER BY user_id, speed_mph DESC;

-- Key Concepts:

-- LAG() = window function to get previous row for same user

-- TIMESTAMPDIFF() = calculate time between logins

-- Haversine formula = great-circle distance between two points on Earth

-- 600 mph = definitely impossible

-- 300 mph = suspicious (fighter jet speeds)

-- Adjust thresholds based on your business needs

Optimizations for Production:

-- Add indexes for performance

CREATE INDEX idx_user_logintime ON LoginActivity(user_id, login_time);

-- For massive datasets, pre-calculate if you have millions of logins:

CREATE TABLE FraudDetectionCache (

user_id INT,

suspicious_logins INT,

last_checked DATETIME

);

-- In application, run expensive fraud query only once per hour per user

-- Cache results and alert only on NEW suspicious activity

-- Consider: Do you need geographic coordinates stored?

-- Could also store "region" (US, EU, Asia) for simpler logic

-- Trade-off: accuracy vs. performance

What the Interviewer Is Testing:

| Aspect | What They’re Looking For |

|---|---|

| Domain Knowledge | Do you know haversine formula? Can you Google it under pressure? |

| Window Functions | Can you use LAG() to compare current row with previous row? |

| Math Skills | Do you understand RADIANS, trigonometry? Can you follow a formula? |

| Production Thinking | Did you think about indexes? Performance? Tuning thresholds? |

| Communication | Can you explain why your thresholds are 300 mph and 600 mph? |

🎯 Want to Ace ANY SQL Interview?

Master 220+ moderate to hard SQL interview questions with detailed explanations, multiple solutions, and performance analysis.

Question 4: The Gap and Island Problem – Find Consecutive Login Dates

Interview Question:

“You have a table with user_id and login_date. Find all continuous periods where a user logged in on consecutive days. For example, if a user logged in on Jan 1, 2, 3, then Jan 6, 7 – output should show two ‘islands’ (Jan 1-3 and Jan 6-7). Show the start date, end date, and number of consecutive days for each island.”

Sample Data:

CREATE TABLE UserLogins (

user_id INT,

login_date DATE

);

INSERT INTO UserLogins VALUES

(101, '2024-01-01'), (101, '2024-01-02'), (101, '2024-01-03'),

(101, '2024-01-06'), (101, '2024-01-07'), -- Gap here

(102, '2024-01-01'), (102, '2024-01-02'), (102, '2024-01-03'),

(102, '2024-01-04'), (102, '2024-01-05');

-- Expected Output:

-- user_id | start_date | end_date | consecutive_days

-- 101 | 2024-01-01 | 2024-01-03 | 3

-- 101 | 2024-01-06 | 2024-01-07 | 2

-- 102 | 2024-01-01 | 2024-01-05 | 5

Solution: Using Window Functions to Identify Islands

WITH date_with_lag AS (

SELECT

user_id,

login_date,

LAG(login_date) OVER (PARTITION BY user_id ORDER BY login_date) as prev_date,

-- Calculate days since previous login

DATEDIFF(DAY,

LAG(login_date) OVER (PARTITION BY user_id ORDER BY login_date),

login_date

) as days_gap

FROM UserLogins

),

island_identifier AS (

SELECT

user_id,

login_date,

-- If gap is NULL (first record) or gap is 1 (consecutive), same group

-- Otherwise, start a new group

SUM(CASE

WHEN days_gap IS NULL OR days_gap = 1 THEN 0

ELSE 1

END) OVER (PARTITION BY user_id ORDER BY login_date) as island_id

FROM date_with_lag

)

SELECT

user_id,

MIN(login_date) as start_date,

MAX(login_date) as end_date,

DATEDIFF(DAY, MIN(login_date), MAX(login_date)) + 1 as consecutive_days

FROM island_identifier

GROUP BY user_id, island_id

ORDER BY user_id, start_date;

-- How this works:

-- 1. LAG() gets previous login date for each user

-- 2. DATEDIFF calculates gap between consecutive logins

-- 3. Create a running sum of "breaks" (when gap > 1)

-- This creates "islands" - groups with same island_id are consecutive dates

-- 4. GROUP BY island to aggregate each island into one row

-- 5. MIN/MAX gets the boundaries, DATEDIFF + 1 gives total days

Why This Pattern Matters:

The “gap and island” pattern appears in many interview questions:

- Session identification (consecutive page visits)

- Uptime detection (consecutive days server was online)

- Order streaks (consecutive purchases by a customer)

- Bug detection (consecutive failed transactions)

Understanding this pattern allows you to solve 10+ variations of similar problems.

Question 5: Running Total with Reset – Calculate Cumulative Revenue Until Order Cancellation

Interview Question:

“You have a transactions table with transaction_id, user_id, amount, and type (PURCHASE, REFUND, CANCEL). Calculate running total revenue for each user, but RESET it to zero whenever a CANCEL transaction occurs. Show the cumulative amount at each transaction.”

Sample Data:

CREATE TABLE Transactions (

transaction_id INT PRIMARY KEY,

user_id INT,

amount DECIMAL(10, 2),

type VARCHAR(20), -- PURCHASE, REFUND, CANCEL

created_at DATETIME

);

INSERT INTO Transactions VALUES

(1, 101, 100, 'PURCHASE', '2024-01-01 10:00:00'),

(2, 101, 50, 'PURCHASE', '2024-01-01 11:00:00'),

(3, 101, 30, 'REFUND', '2024-01-01 12:00:00'),

(4, 101, 0, 'CANCEL', '2024-01-01 13:00:00'), -- Reset cumulative

(5, 101, 75, 'PURCHASE', '2024-01-01 14:00:00'),

(6, 102, 200, 'PURCHASE', '2024-01-01 10:00:00');

-- Expected Output:

-- user_id | transaction_id | type | amount | running_total

-- 101 | 1 | PURCHASE | 100 | 100

-- 101 | 2 | PURCHASE | 50 | 150

-- 101 | 3 | REFUND | -30 | 120

-- 101 | 4 | CANCEL | 0 | 0 (RESET)

-- 101 | 5 | PURCHASE | 75 | 75 (Starts fresh)

-- 102 | 6 | PURCHASE | 200 | 200

Solution: Using Window Functions with Reset Groups

WITH transaction_with_groups AS (

SELECT

transaction_id,

user_id,

CASE type

WHEN 'REFUND' THEN -amount

ELSE amount

END as signed_amount,

-- Mark cancellations

CASE WHEN type = 'CANCEL' THEN 1 ELSE 0 END as is_cancel,

-- Create "reset groups" - every time we see a CANCEL, increment group

SUM(CASE WHEN type = 'CANCEL' THEN 1 ELSE 0 END)

OVER (PARTITION BY user_id ORDER BY created_at) as reset_group

FROM Transactions

)

SELECT

user_id,

transaction_id,

CASE

WHEN is_cancel = 1 THEN 'CANCEL'

WHEN signed_amount < 0 THEN 'REFUND'

ELSE 'PURCHASE'

END as type,

ABS(signed_amount) as amount,

-- SUM of signed amounts within each reset group

SUM(signed_amount) OVER (

PARTITION BY user_id, reset_group

ORDER BY created_at

) as running_total

FROM transaction_with_groups

ORDER BY user_id, transaction_id;

-- Key Insight:

-- 1. Create a running count of CANCEL transactions (reset_group)

-- 2. Partition by both user AND reset_group

-- 3. This creates separate windows for each period between cancellations

-- 4. Each window's SUM resets at the next CANCEL

Real-World Application:

This pattern is used in:

- Payment Processing: Track cumulative charges until charge-back

- Subscription Billing: Track lifetime value until cancellation

- Manufacturing: Track production count until quality reset

- Gaming: Track streaks until player loses/quits

Question 6: Find Sessions Within Sessions – Nested Time Windows

Interview Question:

“A user’s browsing session ends after 30 minutes of inactivity. You have page_view_id, user_id, and timestamp. Identify each session and show for each user: total sessions, longest session duration, and URLs visited in each session. Handle edge cases like midnight boundaries.”

Sample Data:

CREATE TABLE PageViews (

page_view_id INT PRIMARY KEY,

user_id INT,

url VARCHAR(255),

timestamp DATETIME

);

INSERT INTO PageViews VALUES

(1, 101, '/home', '2024-01-01 10:00:00'),

(2, 101, '/products', '2024-01-01 10:05:00'),

(3, 101, '/cart', '2024-01-01 10:10:00'),

(4, 101, '/home', '2024-01-01 10:50:00'), -- > 30 min gap: NEW SESSION

(5, 101, '/checkout', '2024-01-01 10:55:00'),

(6, 102, '/home', '2024-01-01 10:00:00'),

(7, 102, '/products', '2024-01-01 10:20:00');

-- Expected Output:

-- user_id | session_id | start_time | end_time | duration_minutes | urls_visited

-- 101 | 1 | 2024-01-01 10:00:00 | 2024-01-01 10:10:00 | 10 | /home, /products, /cart

-- 101 | 2 | 2024-01-01 10:50:00 | 2024-01-01 10:55:00 | 5 | /home, /checkout

-- 102 | 1 | 2024-01-01 10:00:00 | 2024-01-01 10:20:00 | 20 | /home, /products

Solution: Identify Sessions by Detecting Inactivity Gaps

WITH time_gaps AS (

SELECT

page_view_id,

user_id,

url,

timestamp,

LAG(timestamp) OVER (PARTITION BY user_id ORDER BY timestamp) as prev_timestamp,

-- Calculate minutes since last page view

TIMESTAMPDIFF(MINUTE,

LAG(timestamp) OVER (PARTITION BY user_id ORDER BY timestamp),

timestamp

) as minutes_since_last

FROM PageViews

),

session_groups AS (

SELECT

page_view_id,

user_id,

url,

timestamp,

-- Create session breaks when gap > 30 minutes

SUM(CASE

WHEN minutes_since_last IS NULL OR minutes_since_last > 30 THEN 1

ELSE 0

END) OVER (PARTITION BY user_id ORDER BY timestamp) as session_id

FROM time_gaps

)

SELECT

user_id,

session_id,

MIN(timestamp) as start_time,

MAX(timestamp) as end_time,

TIMESTAMPDIFF(MINUTE, MIN(timestamp), MAX(timestamp)) as duration_minutes,

STRING_AGG(url, ', ') as urls_visited, -- For MySQL use GROUP_CONCAT(url)

COUNT(*) as page_views

FROM session_groups

GROUP BY user_id, session_id

ORDER BY user_id, session_id;

-- Edge Case Handling:

-- 1. Midnight boundary: TIMESTAMPDIFF automatically handles date changes

-- 2. Single page sessions: duration_minutes will be 0 (correct)

-- 3. Very fast navigation: gap < 1 minute = 0, same session (correct)

-- 4. No activity for hours: gap > 30 = new session (correct)

Variations You Might Encounter:

- 30-minute vs. 15-minute sessions: Change the threshold

- Session value: Add SUM(transaction_amount) to find session value

- Bounce rate: Sessions with only 1 page view = bounce

- Conversion funnel: Find sessions that have specific URL sequences

Question 7: Complex Aggregation – Find Top Products by Revenue, But Only Count Profitable Orders

Interview Question:

“Find top 10 products by revenue. But only count orders where (selling_price – cost) > 10. Additionally, show: total orders, orders counted, orders excluded, average profit margin for counted orders. Order by revenue descending.”

Sample Data:

CREATE TABLE Orders (

order_id INT PRIMARY KEY,

product_id INT,

quantity INT,

selling_price DECIMAL(10, 2),

cost DECIMAL(10, 2)

);

INSERT INTO Orders VALUES

(1, 'PROD_A', 5, 50, 30), -- Profit: 20 > 10 ✓

(2, 'PROD_A', 3, 50, 45), -- Profit: 5 ≤ 10 ✗

(3, 'PROD_A', 2, 50, 35), -- Profit: 15 > 10 ✓

(4, 'PROD_B', 10, 100, 85), -- Profit: 15 > 10 ✓

(5, 'PROD_B', 8, 100, 95); -- Profit: 5 ≤ 10 ✗

-- Expected Output:

-- product_id | total_orders | counted_orders | excluded_orders | total_revenue_counted | avg_profit_margin

-- PROD_A | 3 | 2 | 1 | 500 | 17.5%

-- PROD_B | 2 | 1 | 1 | 1000 | 15%

Solution: Conditional Aggregation

SELECT

product_id,

COUNT(*) as total_orders,

-- Count only profitable orders

SUM(CASE

WHEN (selling_price - cost) > 10 THEN 1

ELSE 0

END) as counted_orders,

-- Count excluded (not profitable)

SUM(CASE

WHEN (selling_price - cost) <= 10 THEN 1

ELSE 0

END) as excluded_orders,

-- Revenue from profitable orders only

SUM(CASE

WHEN (selling_price - cost) > 10 THEN selling_price * quantity

ELSE 0

END) as total_revenue_counted,

-- Average profit margin for profitable orders

ROUND(

AVG(CASE

WHEN (selling_price - cost) > 10

THEN ((selling_price - cost) / selling_price) * 100

ELSE NULL -- Exclude non-profitable from average

END),

2

) as avg_profit_margin_pct

FROM Orders

GROUP BY product_id

HAVING SUM(CASE WHEN (selling_price - cost) > 10 THEN 1 ELSE 0 END) > 0

ORDER BY total_revenue_counted DESC

LIMIT 10;

-- Key Techniques:

-- 1. Conditional aggregation with CASE/WHEN/SUM

-- 2. Multiple CASE statements for different metrics

-- 3. HAVING clause to filter after aggregation

-- 4. Only include in AVG calculation (ELSE NULL) for accurate margins

Question 8: Recursive Hierarchies – Find All Managers in the Chain of Command

Interview Question:

“You have an employees table with employee_id, name, and manager_id (self-referencing). Write a query that for a given employee, finds ALL managers in the chain of command (direct manager, manager’s manager, etc.) up to the CEO. Include the chain depth (1 for direct manager, 2 for manager’s manager, etc.).”

Sample Data:

CREATE TABLE Employees (

employee_id INT PRIMARY KEY,

name VARCHAR(100),

manager_id INT,

FOREIGN KEY (manager_id) REFERENCES Employees(employee_id)

);

INSERT INTO Employees VALUES

(1, 'Alice', NULL), -- CEO (no manager)

(2, 'Bob', 1), -- Reports to Alice

(3, 'Charlie', 2), -- Reports to Bob

(4, 'Diana', 2), -- Reports to Bob

(5, 'Eve', 3), -- Reports to Charlie

(6, 'Frank', 4); -- Reports to Diana

-- Tree Structure:

-- Alice (1)

-- └─ Bob (2)

-- ├─ Charlie (3)

-- │ └─ Eve (5)

-- └─ Diana (4)

-- └─ Frank (6)

-- Query: Find all managers for employee_id = 5 (Eve)

-- Expected Output:

-- employee_id | name | depth

-- 3 | Charlie | 1 (direct manager)

-- 2 | Bob | 2 (manager's manager)

-- 1 | Alice | 3 (CEO)

Solution: Using Recursive CTE (WITH RECURSIVE)

WITH RECURSIVE manager_chain AS (

-- ANCHOR: Start with the employee

SELECT

employee_id,

name,

manager_id,

1 as depth

FROM Employees

WHERE employee_id = 5 -- Change to find chain for any employee

AND manager_id IS NOT NULL -- Only if they have a manager

UNION ALL

-- RECURSIVE: Get each successive manager

SELECT

e.employee_id,

e.name,

e.manager_id,

mc.depth + 1

FROM Employees e

INNER JOIN manager_chain mc ON e.employee_id = mc.manager_id

WHERE e.manager_id IS NOT NULL -- Stop at CEO (no manager)

)

SELECT

employee_id,

name,

depth

FROM manager_chain

ORDER BY depth;

-- CRITICAL CONCEPT:

-- 1. First SELECT (ANCHOR): Starts with the target employee's manager

-- 2. UNION ALL: Combines with recursive results

-- 3. Recursive SELECT: Joins back to find next manager in chain

-- 4. Recursion stops when manager_id IS NULL (reached CEO)

-- 5. depth increments with each level

Common Variations:

-- Variation 1: Find all REPORTS for a given manager (top-down)

WITH RECURSIVE employee_hierarchy AS (

SELECT employee_id, name, manager_id, 1 as depth

FROM Employees

WHERE manager_id = 2 -- Find all reporting to Bob

UNION ALL

SELECT e.employee_id, e.name, e.manager_id, eh.depth + 1

FROM Employees e

INNER JOIN employee_hierarchy eh ON e.manager_id = eh.employee_id

)

SELECT * FROM employee_hierarchy ORDER BY depth;

-- Variation 2: Build full path (Alice > Bob > Charlie > Eve)

WITH RECURSIVE manager_path AS (

SELECT

employee_id,

name,

manager_id,

CONCAT(name) as path,

1 as depth

FROM Employees

WHERE employee_id = 5 AND manager_id IS NOT NULL

UNION ALL

SELECT

e.employee_id,

e.name,

e.manager_id,

CONCAT(e.name, ' > ', mp.path),

mp.depth + 1

FROM Employees e

INNER JOIN manager_path mp ON e.employee_id = mp.manager_id

WHERE e.manager_id IS NOT NULL

)

SELECT * FROM manager_path ORDER BY depth DESC;

-- Result: "Alice > Bob > Charlie > Eve"

Performance Considerations:

- Infinite loops: Always have a termination condition (WHERE clause)

- Deep hierarchies: Use MAX_RECURSION_DEPTH or LIMIT to prevent runaway queries

- Denormalization: For very deep trees, consider storing “path” in the table

- Indexing: Index manager_id for faster joins in recursive step

Still Struggling With SQL Interviews?

These 8 questions are just the beginning. There are hundreds more patterns and edge cases you need to practice.

Get access to our complete e-book with 220+ SQL interview questions covering:

- Window functions mastery

- Complex joins and subqueries

- Optimization techniques

- Real business problems

- Step-by-step solutions

Unlock The Complete Interview Guide →

Interview Tips for These Hard Questions

✅ What Interviewers Want to Hear:

- “Can I clarify the requirements?” – Shows you don’t make assumptions

- “Let me think about the edge cases first…” – Shows mature thinking

- “This approach will work, but let me show you a better one…” – Shows depth of knowledge

- “On a 10 million row table, this would be slow because…” – Shows production thinking

- “Here’s the tradeoff: accuracy vs. performance…” – Shows nuanced understanding

- “I’d add indexes on…” – Shows you think about optimization

❌ What They DON’T Want to Hear:

- “Let me just code this…” (without planning first)

- “I don’t know window functions” (they’re standard now)

- “This query takes 10 seconds on 1M rows, but that’s fine” (no, it’s not)

- “I’ll just SELECT * and filter in the application” (classic mistake)

- “I’ve never heard of that SQL feature” (ok to say, but learn it before interview)

How to Prepare for These Questions:

- Practice on LeetCode/HackerRank: Daily SQL problems build muscle memory

- Understand the ‘why’: Not just syntax, but why one approach beats another

- Test with real data: Set up a local database with millions of rows

- Explain out loud: Practice explaining your solutions as if interviewing

- Think about edge cases: Empty tables, NULL values, duplicates, large datasets

- Learn query plans: EXPLAIN helps you understand what database is doing

Key Takeaways

- Master window functions: LAG, LEAD, ROW_NUMBER, RANK, SUM OVER, etc.

- Understand recursive CTEs: They’re simpler than you think, very powerful

- Know performance patterns: Self-joins vs. window functions, when to use each

- Think about edge cases: Empty results, NULL handling, boundary conditions

- Practice explaining: Code is easy, explaining clearly is hard

- Know multiple solutions: Show you can solve problems different ways

- Production thinking: Indexes, query plans, 10M row implications matter

Ready to Master SQL Interviews?

These 8 questions represent common patterns across hundreds of variations. The best way to prepare is to practice consistently with diverse problems.

Want 2,200+ More Questions Like This?

The Data Monk has compiled the most comprehensive analytics interview question bank — SQL, Python, ML, Statistics & Case Studies. Built by 50+ experts from Amazon, Flipkart & OYO.